Typhoon Tip

Meteorologist

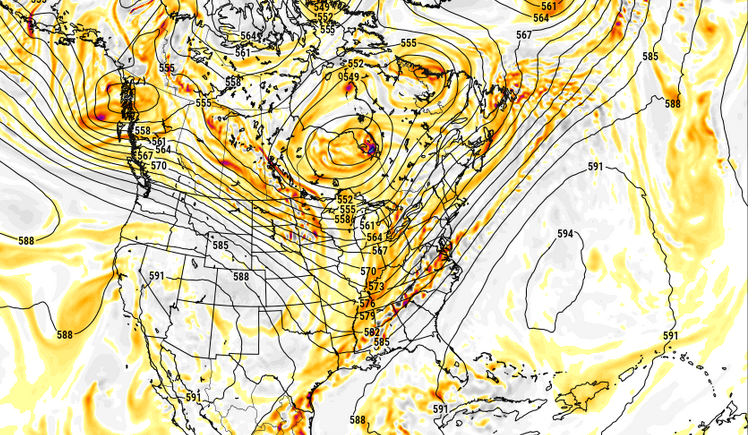

I still think there's a chance that the trough is too progressive in some of this guidance, and that a slower/attenuated total mass results in more EC parallel/quasi-parallel flow - i.e., a bit of a Bahama Blue. Admittedly, that is not what this is ... but it's not far from it, considering this frame is about 60 hours in and the trough is still W of 90. My speculation won't be hurt if it isn't realized, just sayin'

-

NAM grid suggests a hot day tomorrow. 577 dm hydrostats probably means the DPs are rich, so that'll likely keep the T from going too crazy, but you'd be talking about 93 .. 94/76 type stuff. Oof.
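For anyone wondering why a big thickness number can point at moisture as much as raw heat: thickness tracks the layer-mean virtual temperature, and water vapor raises virtual temperature. A minimal sketch of that relationship via the hypsometric equation, assuming "hydrostats" here means the standard 1000-500 mb thickness (the function name and constants below are illustrative choices, not anything from the NAM grid itself):

```python
import math

G0 = 9.80665     # standard gravity, m/s^2
R_DRY = 287.05   # specific gas constant for dry air, J/(kg K)


def mean_virtual_temp_k(thickness_m, p_bottom_hpa=1000.0, p_top_hpa=500.0):
    """Layer-mean virtual temperature (K) from the hypsometric equation.

    thickness = (R_DRY * Tv / G0) * ln(p_bottom / p_top), solved for Tv.
    The 1000-500 hPa layer is an assumption about what the post refers to.
    """
    return G0 * thickness_m / (R_DRY * math.log(p_bottom_hpa / p_top_hpa))


# 577 dm = 5770 m
tv_k = mean_virtual_temp_k(5770.0)
print(f"577 dm -> layer-mean Tv of about {tv_k - 273.15:.1f} C")
```

Since the result is a virtual temperature, a humid column reaches the same thickness at a slightly lower actual temperature than a dry one, which fits the idea that rich DPs account for part of the number.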

-

80 at 9:35. "10 after 10" 'll be an interesting test today. We may be 82 or 83 at this rate by the top of the hour, which, if that old adage bears any usefulness ... sends us about 7 deg above MOS around the BDL-FIT-ASH-MHT horn. Although it's probably only 76 at BDL at 10 ... 10 after 10 isn't precise either.

-

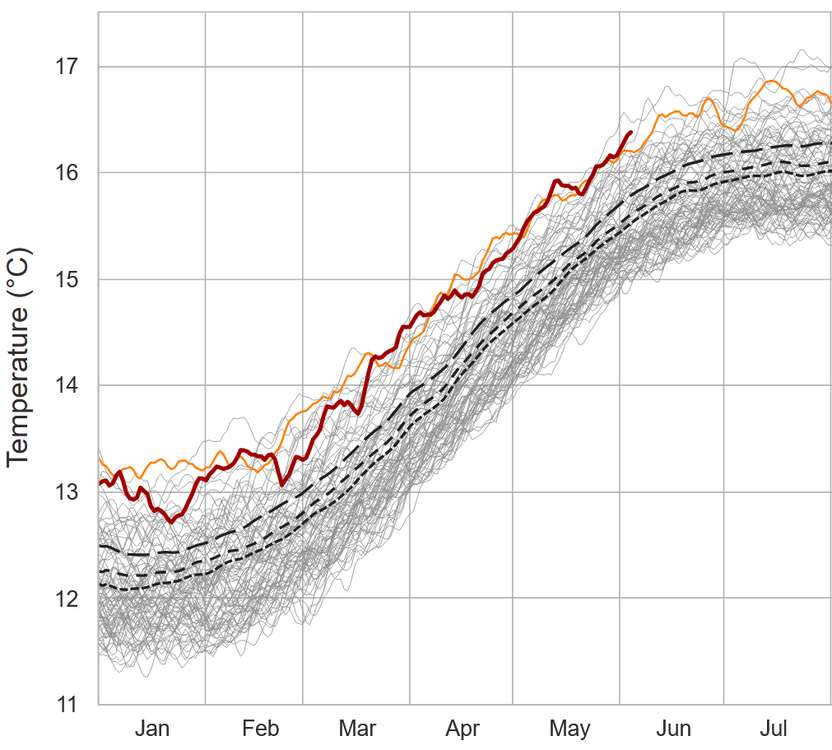

I'm speculating 16.8 to 16.9 C and a new date-relative record wrt global 2-meter mean temp by June 26th

-

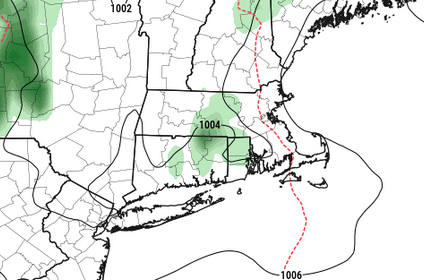

It'll be interesting to test that, the scale/extent/presence of BD over the next two days. Particularly on Friday... I see in the 00z GFS, ultra anal close-up OCD Rain Man inspection, that yeah ... there is a 'bulge' west in the PP over E-NE zones... perhaps as far W as ORH, but we're talking 1 to 2 whopping mb here really... if this is even real. 06z has this less so.

I've noticed this about guidance, et al, over the last 5 to 7 years. They have improved significantly in the boundary layer, where prior generations of modeling had trouble due to the termination of fields in boundary mechanics. They didn't ... or couldn't, really, process what is happening as the boundary - in this context, Earth - is approached. That's why they used to miss "tucks" in winter storms of lore, erode cold too fast ahead of warm fronts in general, all that cold lag winning shit. They are better at it, but ... it's like they're getting better assessment by over-assessing. I see them create these kinds of BD-esque looking features that don't exist, more than they ever used to... right around the same time they've all improved on BL handling in general.

So... I've spent probably waaaay too much time on this subject this morning at this point, and it's probably a fool's errand considering the room is empty and no one's even reading this very sentence... hahaha. ...yeah

-

I mean they're spot on with this conceptually, and to an extent with the detailing, but they're blowing it with the spatial layout - not without giving a reason why they think metro west and Fitchburg-Lawrence and up to Manchester are not part of the diagnostics, which they don't...? Oh, they do okay... I guess they're okay the way they handled it -

"Confidence continues to increase that heat and humidity will pose a risk Thursday and Friday. As the warm front from Wednesday lifts further north, prolonged southwesterly flow will bring a surge of very hot and humid air, especially as 925mb temperatures increase to +27C Thursday and up to +30C Friday. Surface dewpoints are likely to top out in the upper 60s to low 70s, especially across interior MA and northern CT. These high dewpoints combined with temperatures climbing into the low to mid 90s will lead to heat index values approaching 100F Thursday and likely above 100F Friday across the CT River Valley, prompting Heat Advisories to be issued for northern CT and western MA from noon Thursday until 8PM Friday. especially away from the coastal plain. Heat Advisories may be expanded further east; however, a backdoor cold front is expected to drop into eastern MA sometime on Friday"

Not sure I agree synoptically.. I admit to a flaccid PP, but I don't see a very obvious BD mechanism, either.
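For a rough feel of how the temperature/dewpoint pairs in that discussion map to heat index, here is a minimal sketch. It assumes the basic NWS Rothfusz regression (without its low- and high-humidity adjustment terms) and a Bolton-style Magnus approximation to turn dewpoint into relative humidity. The function names and the sample 93/70 and 95/72 pairs are illustrative picks from the quoted ranges, not values the office published.

```python
import math


def f_to_c(temp_f):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def vapor_pressure_hpa(temp_c):
    """Saturation vapor pressure (hPa), Bolton-style Magnus approximation."""
    return 6.112 * math.exp(17.67 * temp_c / (temp_c + 243.5))


def relative_humidity_pct(temp_f, dewpoint_f):
    """Relative humidity (%) from air temperature and dewpoint in F."""
    e = vapor_pressure_hpa(f_to_c(dewpoint_f))
    es = vapor_pressure_hpa(f_to_c(temp_f))
    return 100.0 * e / es


def heat_index_f(temp_f, dewpoint_f):
    """Heat index (F) from the basic Rothfusz regression.

    Intended for hot, humid conditions (roughly 80 F and up); the
    adjustment terms for very dry or very humid air are omitted here.
    """
    t = temp_f
    rh = relative_humidity_pct(temp_f, dewpoint_f)
    return (-42.379 + 2.04901523 * t + 10.14333127 * rh
            - 0.22475541 * t * rh - 0.00683783 * t * t
            - 0.05481717 * rh * rh + 0.00122874 * t * t * rh
            + 0.00085282 * t * rh * rh - 0.00000199 * t * t * rh * rh)


# Illustrative pairs drawn from "low to mid 90s" over "upper 60s to low 70s"
for temp_f, dewpoint_f in [(93, 70), (95, 72)]:
    print(f"{temp_f}/{dewpoint_f} -> HI near {heat_index_f(temp_f, dewpoint_f):.0f} F")
```

Working from dewpoint rather than RH keeps the inputs in the same T/Td shorthand used in this thread; the RH step is only an intermediate.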

-

76 at 8:20 am isn't bad. Sat's a grungy mess though. Partly to mostly sunny for now, but there's convective debris in heavier patchwork lurking nearby west, inching east. The sun may alter the sounding such that some of that starts to vanish - not uncommon - but we'll see. Heat over the next 3 days is going to be battling a bit of cloud pollution though. Most guidance 700, 500, 300 mb level RH fields are contaminated with occasional 70%.

-

Just between you and me (and the social media'sphere, heh)... sometimes I'm wondering if learning AI is being used at NWS offices, and it's not quite up to the task just yet. Like it still needs a helicopter teacher. I've noticed a lot of those kinds of hard-to-explain head-scratch nuances. There aren't as many in urgent, more obviously significant situations, which makes sense... these latter types are more human-eye required? I could see this scenario being "unchecked" yet; in need of doing so. But I'm also a sci fi writer in another life so -

-

Advisory level headline heat tomorrow and Friday ...cloud depending. I imagine the current layout gets extended into metro west of Boston and up the rt 3 corridor toward MHT eventually. Not seeing why Greenfield MA is going to be warmer than the Framingham MA to Manchester NH axis, but we'll see

-

Speaking of the NAM grid.... woof. Thickness 572 to 575, first time this season, basically sets in now, out thru 60 hours. 850s look like 15C. Lot of cloud production tho, so that probably limits its already-present tendency for 2-meter temp retardation a bit. 30C at 980mb in NYC on Thursday tho would probably be 96 in EWR

-

I am not against, nor a fan of, Judah Cohen's contributions ... I realize there's been some banter in here that's ranged from flattery to ... not so flattering opinions of the guy - I'm not part of that one way or the other. Having defined that ... I don't have a problem at all with his surmise/intimation there that it bears some semblance to last winter. I said almost exactly that to Brian in a post yesterday or the day before. Whether it ends up "comfortable" like he suggests is a different combination of metrics than just temp though. He needs to be careful there ... or at least, the readers/consumers need to be aware. If it ends up normal temperatures ... that doesn't automatically intimate lower DPs to me. Not at this time of year - nor particularly given what the general synoptic trends look like out there. Particularly, if the transient trough in the Lakes starts to retrograde like the 00z ens clusters all agreed it would do... that means it is challenged to really make it COC-like. It would be more vaginal I guess... heh ( puerile humor ). It's a new-ish signal, but the neg anomalies associated move back toward the S/W continent just beyond next week. For a real air cleansing... the trough needs to go the other way ... opening up and progressing E.

-

Pleezy weezy with sugar on top let's see that happen. ha

-

I realize the NAM is a dead dog model ... are they going to continue running it in parallel for a while after the fact? Are there going to be gridded data sources from whatever meso madness they're going to, similar to FOUS? May be a Q/A for NWS personnel ... if there's anyone still actually working at NWS since we've gon' and made 'merica all great and all

-

Yeah, there's definitely a kind of neg-head bias about signaling NE of the Mason-Dixon. Because we don't get 55 K foot tropopause-stabbing nuclear updrafts with stove pipes carving canyons underneath, 'they're not worthy ...' Kidding... but there's something like a wait-and-see thing up here? I've seen more upgrades than planned scenarios - it's almost like that's their policy.